Computational Physics

Motivation

I once took a class on computational physics in grad school, but the class was taught using Fortran 90, which is usually used for intense numerical applications in academia and maybe industry, but not much else. I wanted to learn some Python and also review and learn more about computational techniques, so I decided to work my way through Mark Newman’s Computational Physics. My solutions to the exercises can be found here.

Thoughts

The most interesting thing I learned was the idea of Richardson extrapolation. The book introduces this through Romberg integration and the Bulirsch-Stoer method of solving ordinary differential equations.

Here’s the basic idea. Suppose you have a method to approximate some quantity , and that this method depends on a small parameter in such a way that the approximations get better and better the smaller is. As a concrete example, think of computing a definite integral and as the step size in a numerical integration method such as the trapezoidal rule.

The idea of Richardson extrapolation is that by approximating with two different (appropriately related) values of , you can combine them to get a new estimate with a much smaller error estimate. How those values of should be related and how you combine them depend on the specifics of what you’re computing.

For example, in Romberg integration you compute an estimate with step size , then compute a second estimate with a step size . You get a new, better estimate by adding to a term proportional to .

Examples

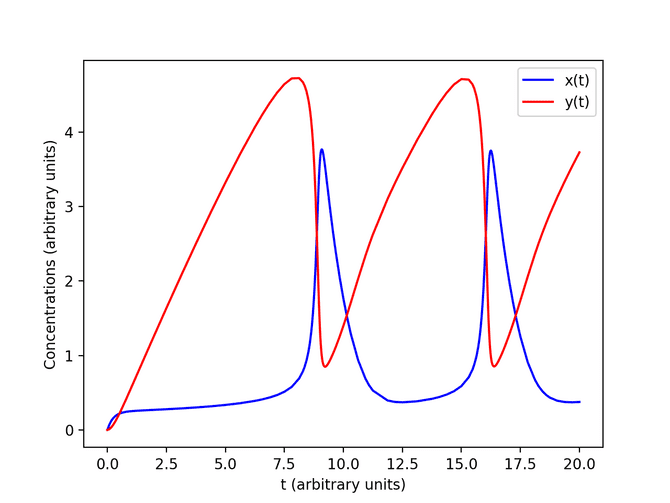

Adaptive Bulirsch-Stoer

In the example above, we solve the coupled, nonlinear ordinary differential equations

with with initial conditions and accuracy of per unit time. We solved this system using an adaptive Bulirsch-Stoer method. See my solution here.

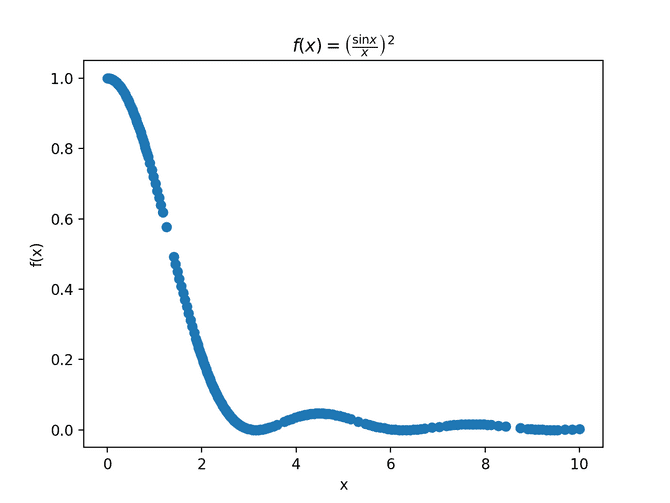

Adaptive trapezoidal rule

Another example is using an adaptive trapezoidal rule to estimate the value of

We use the trapezoidal rule to compute the integral, but the step size is automatically adjusted so that the error estimate for each integration slice is no greater than some target value (so that the overall error is bounded). See my solution here.

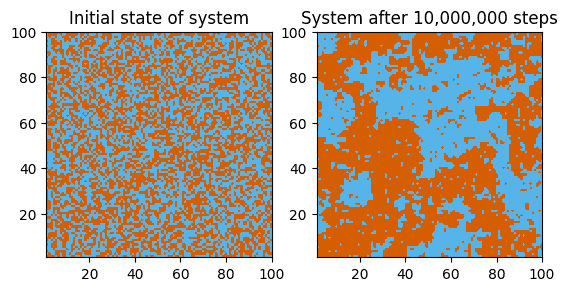

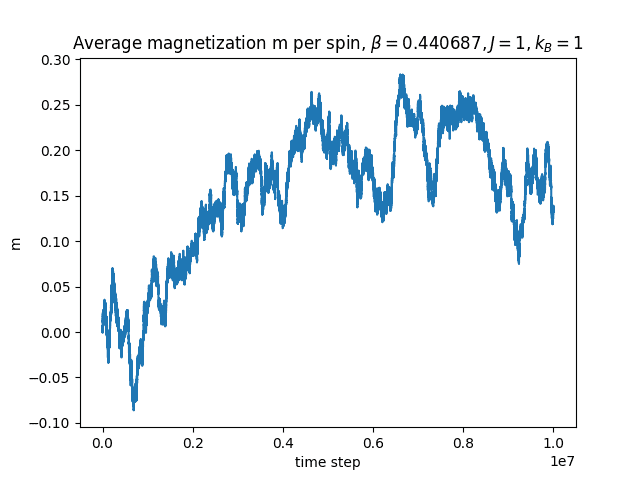

2D Ising model

Of course we also simulate the well-known 2D ferromagnetic Ising model. We perform a Markov chain Monte Carlo simulation of the Ising model on a 100 × 100 square lattice with zero magnetic field and nearest-neighbor interactions.